By ARIEL MALIK

Australia has a healthy instinct for cutting through noise. We love innovation, but we trust what performs under pressure. And right now, the most important conversation in AI is not only about smarter algorithms or bigger chips. It is also about speed: specifically, the speed and reliability of data moving through systems in real time.

I’m ARIEL MALIK, and from where I stand, the next bottleneck in AI is already clear. When data arrives faster than infrastructure can react, AI becomes delayed. And delayed AI is not intelligence; it is hindsight.

The real constraint is data flow

In live environments, AI does not work in neat batches. It works in streams. Signals arrive from users, devices, sensors, transactions, and networks, all at once. Many systems still rely on buffering and queuing, which creates unpredictable latency, especially under heavy load.

In Australia, that is not a small issue. Our operations are distributed, our industries operate in the physical world, and performance needs to stay stable across distance and scale. Predictable milliseconds matter in mining, logistics, utilities, finance, and security. When systems lag, the cost shows up directly in operations.

As I often say, the value of AI is measured in the moment it can act.

Why data acceleration is becoming essential

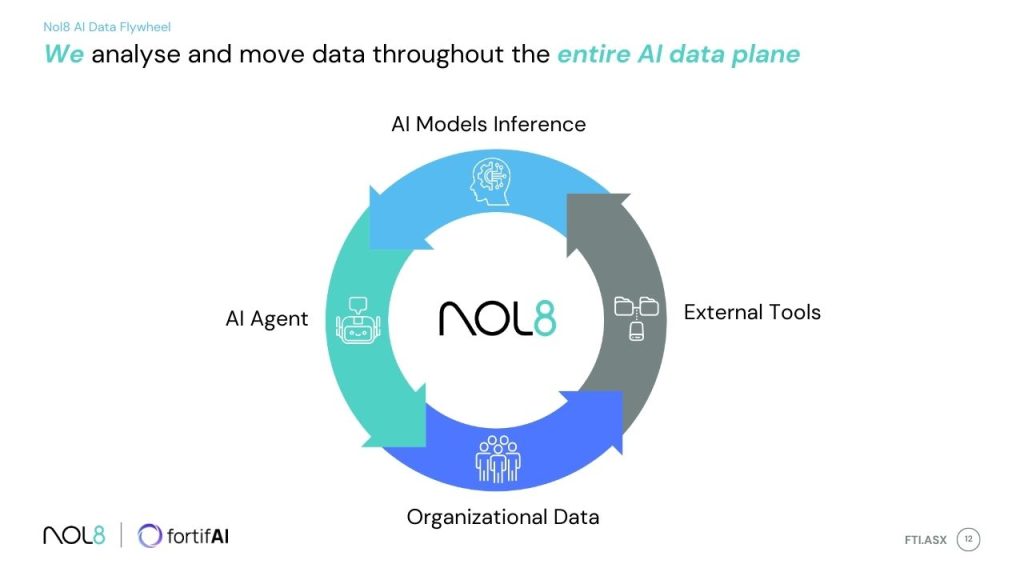

Data acceleration is emerging as critical infrastructure for modern AI and cloud environments because it changes the basic equation. Instead of collecting data, storing it, and then processing it later, the goal becomes acting on data as it arrives. Inline analysis. Real-time streaming. No batching. No waiting.
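To make the contrast between batching and acting on arrival concrete, here is a minimal sketch in Python. It is an idealised model, not a description of any particular platform: the names `Event`, `batch_wait_ms`, and `inline_wait_ms` are hypothetical, processing time is ignored, and a batch is assumed to be processed the moment its last event arrives.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Event:
    """A single piece of incoming data with its arrival time in milliseconds."""
    arrival_ms: float
    payload: str


def batch_wait_ms(events: List[Event], batch_size: int) -> List[float]:
    """Waiting time per event when events are held until a batch fills.

    Assumption: a batch is processed when its last event arrives, and a
    final partial batch is flushed at the arrival of its last event.
    """
    waits: List[float] = []
    for start in range(0, len(events), batch_size):
        batch = events[start:start + batch_size]
        flush_ms = batch[-1].arrival_ms
        waits.extend(flush_ms - event.arrival_ms for event in batch)
    return waits


def inline_wait_ms(events: List[Event]) -> List[float]:
    """Waiting time per event when each event is acted on as it arrives.

    In this idealised model there is no queueing, so the wait is zero.
    """
    return [0.0 for _ in events]


if __name__ == "__main__":
    # Eight events arriving 10 ms apart (illustrative numbers only).
    stream = [Event(arrival_ms=i * 10.0, payload=f"signal-{i}") for i in range(8)]
    print("batch waits: ", batch_wait_ms(stream, batch_size=4))
    print("inline waits:", inline_wait_ms(stream))
```

In this toy model the earliest event in each batch of four waits 30 ms before anything acts on it, while the inline path adds no waiting at all. Real systems also pay processing and network time, so the figures only illustrate where batching adds delay.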

What makes this shift so compelling is that it can reduce infrastructure sprawl. Less complexity, fewer layers, lower power use, and often lower cost. In Australia, where energy efficiency is becoming a board-level issue for tech-heavy organisations, this matters more than ever.

The mindset I look for in this space

My approach is simple. I back ideas that improve performance and reduce complexity at the same time. Speed without stability is not a product. Scale without predictability is not progress.

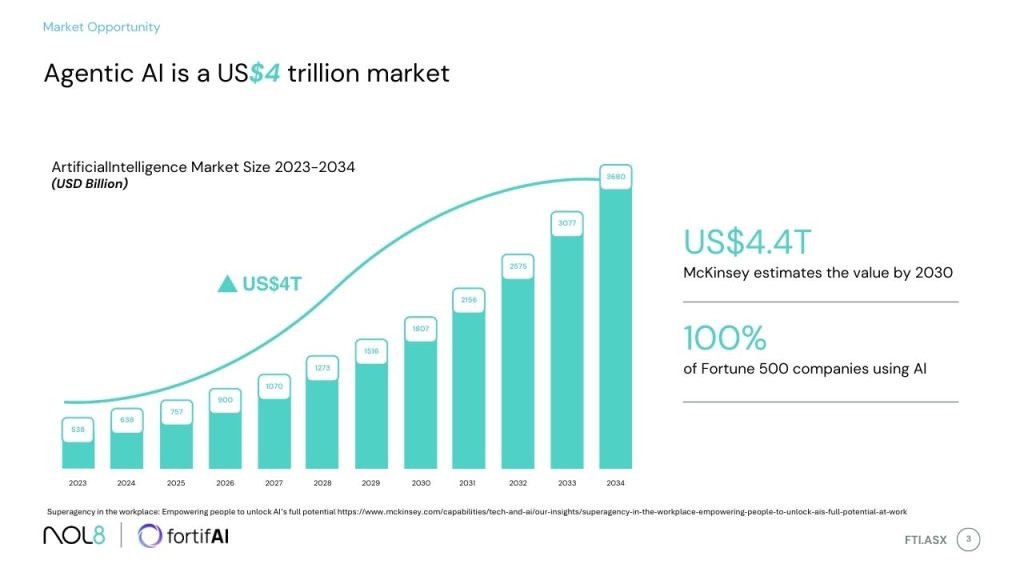

That is why I pay attention to platforms positioning themselves as a new paradigm in data processing, including technologies like NOL8, which focus on consistent millisecond-grade latency under extreme load, flexible deployment across cloud or on-premises, and a performance-first architecture built on published research.

What happens next

For this to become the standard, the market needs to reward outcomes, not legacy comfort. Procurement should value predictable latency and energy efficiency. Enterprises should demand evidence, stress testing, and clean integration pathways. Australia, in particular, will adopt what is proven, not what is loudly marketed.

In the coming years, the winners in AI will not only be those who build smarter models. They will be those who build faster, leaner, more reliable data systems beneath them.


Authorised for release by the Board of Fortifai Limited.

Media and Public Relations contact: Emily Walkerden, emily@nol8.io.